Notes from AKI & CRRT 2026

I spent the last week of March in San Diego for AKI & CRRT 2026 — the 31st International Conference on Advances in Critical Care Nephrology, held at the Manchester Grand Hyatt from March 29 to April 1. The AITRICS research team had two abstracts accepted; both were selected as Oral Posters, which was the main reason for flying out. My colleague Donghwee Yoon and I traveled together and split the two presentations between us.

Our oral posters

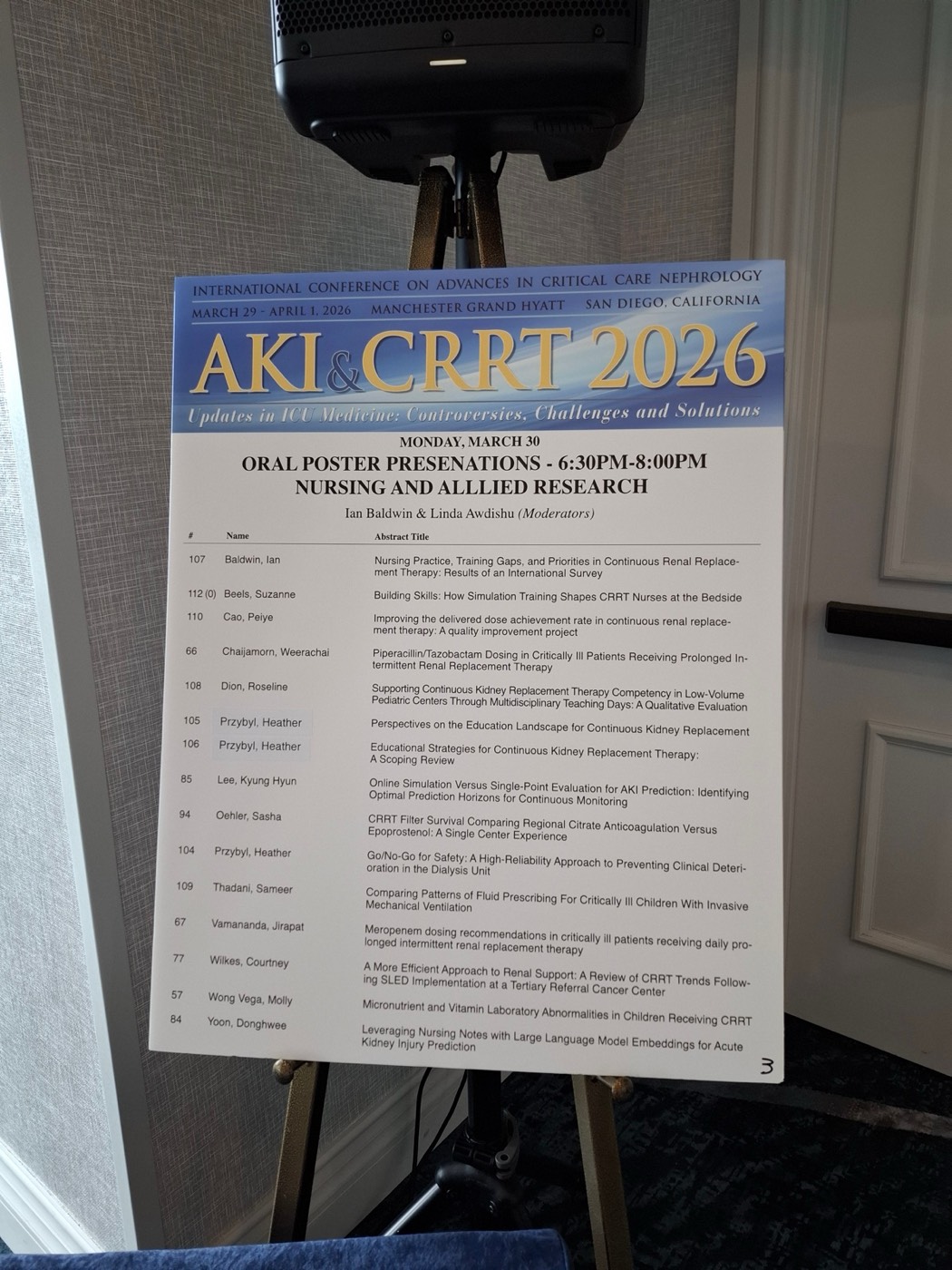

Both talks were part of the Nursing and Allied Research Oral Poster session on Monday, March 30 (6:30–8:00 PM).

Poster #85 — Online Simulation Versus Single-Point Evaluation for AKI Prediction: Identifying Optimal Prediction Horizons for Continuous Monitoring (Kyung Hyun Lee). My talk focused on how we evaluate a time-series AKI prediction model. Conventional evaluation treats each patient as a single-point snapshot, but a deployed model runs continuously and re-predicts as new measurements arrive. We built an online simulation harness that replays the EHR stream the way a bedside system would see it, then measured performance as a function of prediction horizon. The single-point vs. online-simulation comparison shows that the “best” horizon depends heavily on the evaluation protocol, and that the horizon you pick at evaluation time has direct implications for how clinicians should interpret continuous alerts. This work is part of the broader multicenter AKI-prediction manuscript we submitted to npj Digital Medicine.
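To make the difference between the two protocols concrete, here is a minimal toy sketch. It is not the harness from the poster: the `Patient` structure, the `toy_model` scoring rule, the alert threshold, and the two example patients are all hypothetical, chosen only to show how one prediction per patient differs from replaying the stream and re-predicting at every new observation.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional, Tuple

Obs = Tuple[float, float]  # (hours since admission, creatinine-like value)


@dataclass
class Patient:
    observations: List[Obs]          # time-ordered toy stand-in for an EHR stream
    aki_onset_hour: Optional[float]  # None if the patient never develops AKI


def toy_model(history: List[Obs]) -> float:
    """Hypothetical risk score: relative rise of the latest value over the first."""
    first, last = history[0][1], history[-1][1]
    return max(0.0, (last - first) / first)


def confusion(pairs: List[Tuple[float, bool]], threshold: float) -> Dict[str, int]:
    """Count alert outcomes for (score, label) pairs at a fixed threshold."""
    return {
        "tp": sum(1 for s, y in pairs if s >= threshold and y),
        "fp": sum(1 for s, y in pairs if s >= threshold and not y),
        "fn": sum(1 for s, y in pairs if s < threshold and y),
        "tn": sum(1 for s, y in pairs if s < threshold and not y),
    }


def single_point_pairs(patients: List[Patient], model: Callable, horizon: float):
    """One (score, label) per patient, scored at a single snapshot time."""
    pairs = []
    for p in patients:
        positive = p.aki_onset_hour is not None
        # Positives are scored `horizon` hours before onset, negatives at their last observation.
        cutoff = p.aki_onset_hour - horizon if positive else p.observations[-1][0]
        history = [o for o in p.observations if o[0] <= cutoff]
        if history:  # skip patients with no data before the snapshot
            pairs.append((model(history), positive))
    return pairs


def online_pairs(patients: List[Patient], model: Callable, horizon: float):
    """One (score, label) per observation, replayed in time order."""
    pairs = []
    for p in patients:
        for i, (t, _) in enumerate(p.observations):
            if p.aki_onset_hour is not None and t >= p.aki_onset_hour:
                break  # stop predicting once AKI has already occurred
            # Label: does onset fall within `horizon` hours after this prediction?
            label = p.aki_onset_hour is not None and p.aki_onset_hour - t <= horizon
            pairs.append((model(p.observations[: i + 1]), label))
    return pairs


# Two hypothetical patients: one develops AKI at hour 24, one has a transient bump.
PATIENTS = [
    Patient([(0, 1.0), (6, 1.0), (12, 1.3), (18, 1.6)], aki_onset_hour=24),
    Patient([(0, 1.0), (6, 1.2), (12, 1.0), (18, 1.0)], aki_onset_hour=None),
]
THRESHOLD = 0.15  # arbitrary alert threshold for the toy score

for horizon in (6, 12, 24):
    sp = confusion(single_point_pairs(PATIENTS, toy_model, horizon), THRESHOLD)
    on = confusion(online_pairs(PATIENTS, toy_model, horizon), THRESHOLD)
    print(f"horizon={horizon:>2}h  single-point={sp}  online={on}")
```

On this toy data with a 12-hour horizon, the single-point protocol sees one true positive and one true negative, while the online replay also surfaces the transient false alert on the second patient. That is the kind of behavior a once-per-patient snapshot cannot show.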

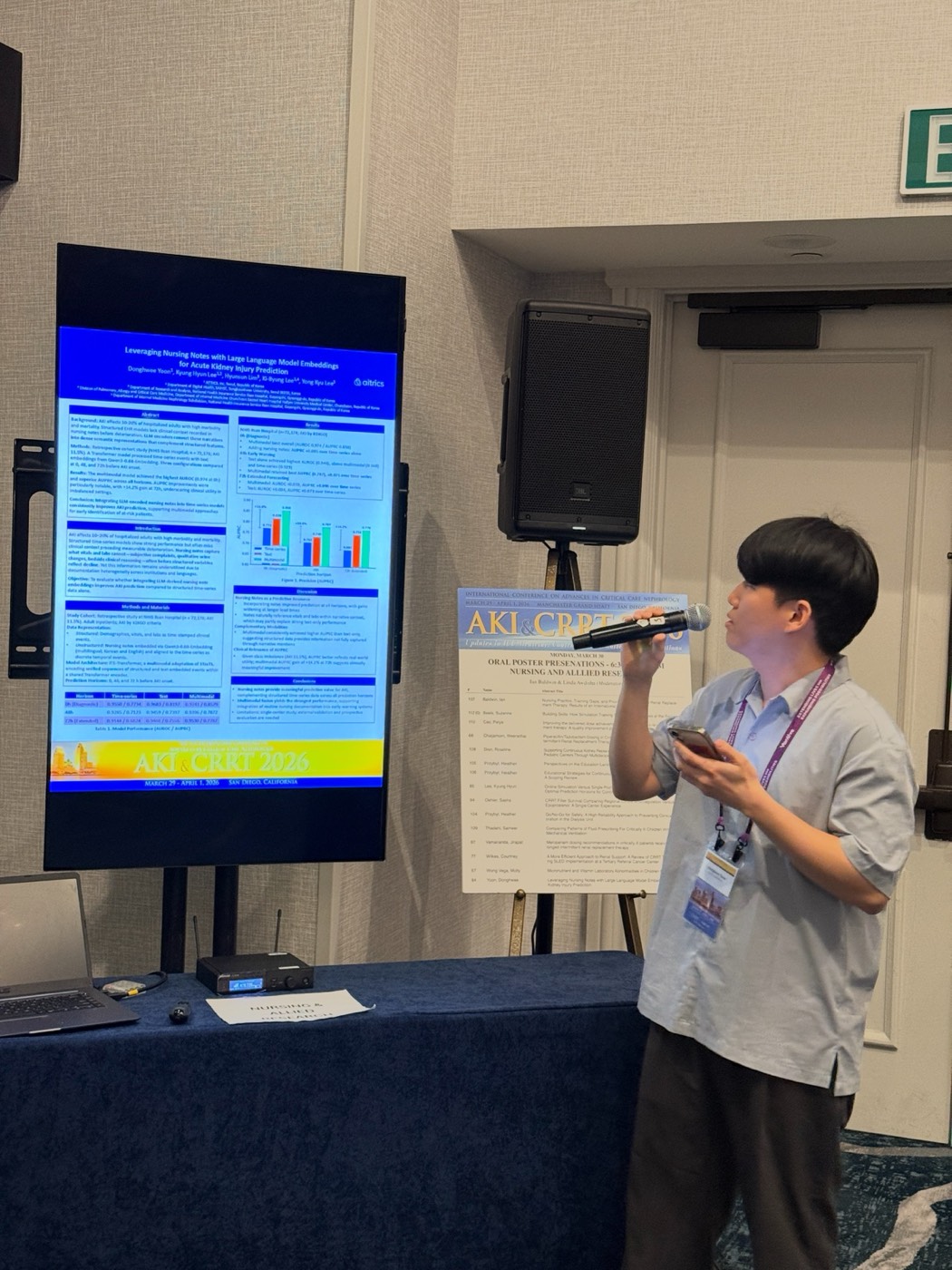

Poster #84 — Leveraging Nursing Notes with Large Language Model Embeddings for Acute Kidney Injury Prediction (Donghwee Yoon). Donghwee’s talk extended the prediction model in a different direction: adding unstructured nursing notes on top of the existing time-series signal. The notes are encoded with LLM embeddings and fused with vitals and labs in a multimodal architecture. The performance lift over a vitals-only baseline was consistent and, more importantly, the gains were largest for the populations where structured data alone is thinnest.
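As a rough illustration of what fusing notes with structured data can look like, here is a minimal sketch of feature-level fusion by concatenation. It is an assumption for illustration only, not the architecture from the poster: the `pool_notes` and `fuse` helpers, the mean pooling, the has-note flag, and the two-dimensional toy embeddings are all hypothetical, and a real system would get its embeddings from an LLM encoder.

```python
from typing import List, Sequence, Tuple

Note = Tuple[float, List[float]]  # (hour written, precomputed embedding vector)


def pool_notes(notes: Sequence[Note], now: float, dim: int) -> List[float]:
    """Mean-pool embeddings of notes written at or before `now`.

    Returns a zero vector when no note is available yet.
    """
    visible = [emb for t, emb in notes if t <= now]
    if not visible:
        return [0.0] * dim
    return [sum(col) / len(visible) for col in zip(*visible)]


def fuse(structured: List[float], notes: Sequence[Note], now: float, dim: int) -> List[float]:
    """Concatenate [structured features | pooled note embedding | has-note flag]."""
    has_note = 1.0 if any(t <= now for t, _ in notes) else 0.0
    return list(structured) + pool_notes(notes, now, dim) + [has_note]


# Toy inputs: two structured features (e.g. a vital and a lab) and three notes.
notes = [(2.0, [1.0, 0.0]), (8.0, [0.0, 1.0]), (20.0, [4.0, 4.0])]
features = fuse([80.0, 1.2], notes, now=10.0, dim=2)
print(features)
```

Filtering notes by `now` matters in a continuously running setting: a note written at hour 20 must not influence a prediction made at hour 10.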

Conference highlights

Beyond our own posters, what stood out to me across the program:

“The Rise of the AI-Era Physician” — Azra Bihorac (Special Lecture)

Bihorac framed the current cohort as the last generation of physicians who trained without AI, and argued that the next generation needs to be fluent not just in using models but in their design, validation, and deployment. The practical takeaway for me: when you’re building a clinical prediction system, the education layer and the interface layer aren’t “nice to have”; they’re part of the product. How a clinician reads, trusts, and acts on a score determines whether any of the modeling effort matters downstream.

2026 KDIGO AKI Guidelines — Special Session

The Plenary 4 session walked through the updates across definitions/staging, risk assessment, prevention and management, drug and contrast management, post-AKI follow-up, and pediatric AKI (with speakers including Marlies Ostermann, Rolando Claure-Del Granado, and Alex Zarbock). For those of us working on AKI labeling and prediction, the risk-assessment changes matter most: they bear directly on how we define positive cases for training data and on how we set thresholds for continuous monitoring.

AI-for-AKI work I want to come back to

I took detailed notes on three posters in particular:

- Poster #42 — Large Language Model-Based Real-time Acute Kidney Injury Prediction with Explainable Risk Attribution (Lingyi Xu). A multicenter development/validation study that uses LLMs for real-time AKI prediction and attaches per-case risk attribution. Resonates with our multimodal direction; the explainability framing especially stuck with me as a clinical-adoption lever.

- Poster #59 — Clinical Impact of AI-Predicted Acute Kidney Injury Depends on Physician Intervention: Results From a Randomized ICU Trial (Chun-Te Huang). An RCT showing that AI alerts alone don’t move outcomes — what moves them is alerts paired with a structured physician response. A useful grounding for anyone tempted to treat a prediction system as a complete clinical intervention.

- Poster #58 — Machine Learning-Based Prediction of Renal Recovery in CKRT: Added Value of 72-Hour Longitudinal Trajectories (Daseul Huh). Uses 72-hour longitudinal trajectories (MAP, vasopressor score, urine output) to predict renal recovery. Nice complement to our own online-simulation work: another piece of evidence that trajectory-aware evaluation beats single-point snapshots on clinically meaningful outcomes.

Takeaways

Two things I’m leaving with:

- Evaluation protocol is part of the model. Online simulation gave us a very different (and, I’d argue, more honest) picture of “best prediction horizon” than single-point evaluation. I suspect this is going to be true for most continuously deployed clinical models, not just AKI.

- AI for AKI is now crowded — in a good way. LLM-based attribution, trajectory-aware prediction, RCT-grade intervention studies — none of these would have been plausible conference themes a few years ago. The bar has clearly moved, and it’s moved toward the right things (clinical impact, deployment realism, explainability).

Thanks to the AKI & CRRT organizers for a well-run meeting, and to everyone who stopped by our posters.